// AI Model Interface
pragma solidity ^0.8.0;

interface IAIModel {
    function predict(string memory data) external returns (string memory);

    function train(string memory trainingData) external;

    // Accuracy in basis points (8500 = 85.00%); Solidity has no floating-point type
    function getAccuracy() external view returns (uint256);
}

/// @title AI Model Deployment and Management Contract
/// @dev Enables deployment, training, and management of AI models
contract AIModelManager {
    event ModelDeployed(address indexed modelAddress, string modelName);
    event ModelTrained(address indexed modelAddress, uint256 accuracy);
    event ModelPrediction(address indexed modelAddress, string input, string output);

    /// @notice Deploys a new AI model
    /// @param modelName Name of the AI model
    /// @param initialTrainingData Initial training data for the model
    /// @return Address of the deployed AI model contract
    function deployModel(string memory modelName, string memory initialTrainingData)
        external
        returns (address)
    {
        AIModel newModel = new AIModel(modelName);
        newModel.train(initialTrainingData);
        emit ModelDeployed(address(newModel), modelName);
        return address(newModel);
    }

    /// @notice Makes a prediction using an AI model
    /// @param modelAddress Address of the AI model contract
    /// @param input Input data for prediction
    /// @return Prediction result from the AI model
    function makePrediction(address modelAddress, string memory input)
        external
        returns (string memory)
    {
        IAIModel model = IAIModel(modelAddress);
        string memory result = model.predict(input);
        emit ModelPrediction(modelAddress, input, result);
        return result;
    }
}

/// @title AI Model Implementation
/// @dev Basic implementation of an AI model with prediction capabilities
contract AIModel is IAIModel {
    string public modelName;
    uint256 public accuracy; // in basis points
    mapping(string => string) private predictions;

    constructor(string memory _modelName) {
        modelName = _modelName;
    }

    function predict(string memory data) external override returns (string memory) {
        // In a real implementation, this would use ML algorithms
        // Simplified for demonstration
        // Strings cannot be compared with !=, so check the stored byte length instead
        if (bytes(predictions[data]).length != 0) {
            return predictions[data];
        }
        return "Prediction result";
    }

    function train(string memory trainingData) external override {
        // Training logic would be implemented here
        accuracy = 8500; // Example accuracy: 85.00%
    }

    function getAccuracy() external view override returns (uint256) {
        return accuracy;
    }
}
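Solidity has no floating-point type, so a fractional accuracy such as 0.85 has to be stored on-chain as a scaled integer. The sketch below shows one common convention, basis points (1/100 of a percent), in Python. The helper names `to_basis_points` and `from_basis_points` are illustrative assumptions and are not part of the contract above.

```python
from decimal import Decimal, ROUND_HALF_UP

# Assumed scale: 1.0 (100%) is represented as 10,000 basis points.
BASIS_POINTS_PER_UNIT = 10_000


def to_basis_points(accuracy: str) -> int:
    """Convert a fractional accuracy such as "0.85" to integer basis points."""
    value = Decimal(accuracy)
    if not (Decimal(0) <= value <= Decimal(1)):
        raise ValueError("accuracy must be between 0 and 1")
    # Round to the nearest whole basis point before converting to int.
    scaled = (value * BASIS_POINTS_PER_UNIT).quantize(
        Decimal(1), rounding=ROUND_HALF_UP
    )
    return int(scaled)


def from_basis_points(basis_points: int) -> Decimal:
    """Convert integer basis points back to a fraction for display."""
    return Decimal(basis_points) / BASIS_POINTS_PER_UNIT


print(to_basis_points("0.85"))   # 8500
print(from_basis_points(8500))   # 0.85
```

Taking the input as a string and using `Decimal` avoids binary floating-point rounding before the value is scaled.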

Agent Sandbox

Secure, isolated environments with real-world tools for building production-ready AI agents. Works with any LLM.

Data that drives change, shaping the future

Decentralized, secure, and built to transform industries worldwide. See how our platform enables sustainable growth and innovation at scale.

Our platform not only drives innovation but also empowers businesses to make smarter, data-backed decisions in real time. By harnessing the power of AI and machine learning, we provide actionable insights that help companies stay ahead of the curve.

Built for AI Agents

Enterprise-grade infrastructure designed specifically for the next generation of AI agents and workflows.

Any Model, Any Framework

Use OpenAI, Claude, Llama, Mistral, or your custom models. Works seamlessly with LangChain, LangGraph, and AutoGen.

Firecracker Isolation

Each sandbox runs in a secure microVM with full isolation. Protect your infrastructure from untrusted code.

Full Stack Access

Code execution, internet access, file I/O, vector databases, and terminal access—everything your agents need.

Auto-Scaling Infrastructure

Scale from development to production seamlessly. Handle thousands of concurrent sandboxes with ease.

Agent Sandbox Features

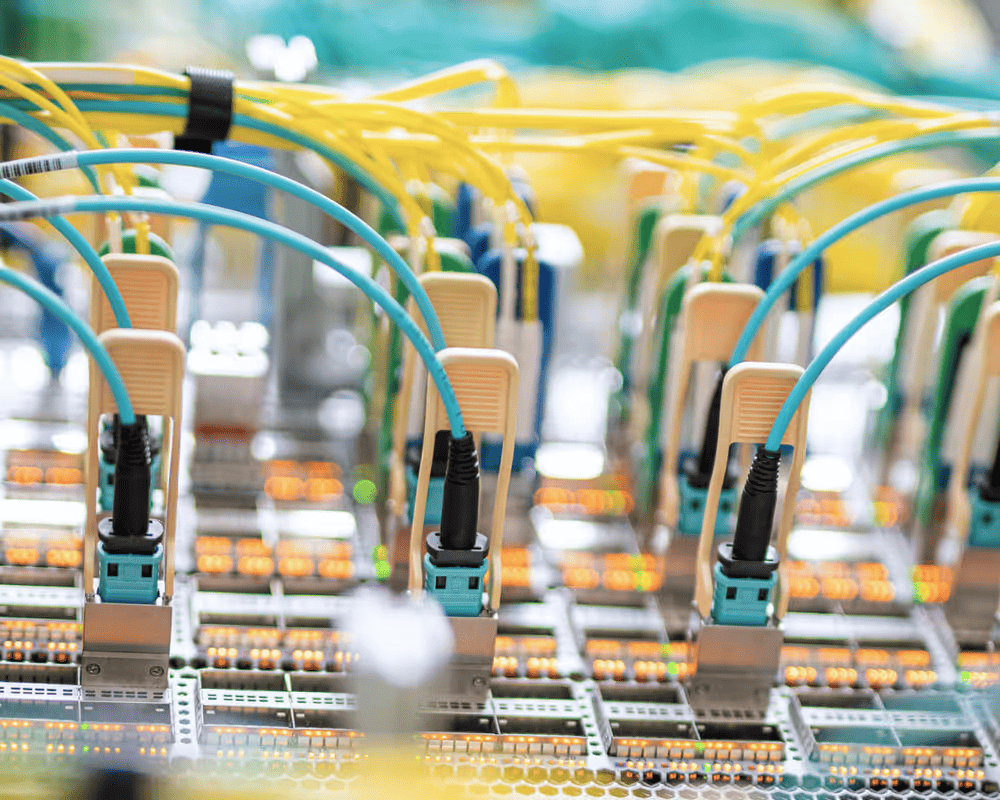

Host your hardware in our enterprise-grade data centers with 24/7 support, redundant power, and advanced security.

- Multi-Model Support: GPT-4, Claude, Llama, Mistral & custom models
- Secure Sandboxes: Firecracker microVM isolation
- Code Execution: Python, JavaScript, shell & more
- Vector Databases: Pinecone, Chroma, FAISS integration
- Internet Access: Web search & API calls
- Long-Running Sessions: Up to 24 hour sessions

Powering the Next Generation of AI

# Research agent with web search and analysis
from medjed_sandbox import Sandbox
from langchain.tools import DuckDuckGoSearchRun

# Create a sandbox for research
sandbox = Sandbox()

# Add web search capability
search = DuckDuckGoSearchRun()

# Run research task
results = sandbox.run("""
Research the latest developments in AI agent architectures.
Find papers from 2024 about:
1. Multi-agent systems
2. Tool use optimization
3. Long-term memory
Summarize key findings.
""")

print(results.summary)

Frequently Asked Questions

If you can't find what you're looking for, email our support team and someone will get back to you.

What models are supported?

We support all major LLMs including GPT-4, Claude 3 Opus/Sonnet/Haiku, Llama 3, Mistral, and custom fine-tuned models. Bring your own API keys or use our hosted models.
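For the bring-your-own-keys option, a minimal sketch of collecting provider keys from environment variables might look like the following. The variable names follow the conventions used by each provider's own SDK. How keys are then handed to a sandbox is not described on this page, so the sketch stops at collection, and the helper names `collect_api_keys` and `missing_providers` are hypothetical.

```python
import os

# Conventional environment variable names used by the providers' own SDKs.
# Passing the keys on to a sandbox is not covered here (not documented above).
PROVIDER_ENV_VARS = {
    "openai": "OPENAI_API_KEY",
    "anthropic": "ANTHROPIC_API_KEY",
    "mistral": "MISTRAL_API_KEY",
}


def collect_api_keys(environ=None) -> dict:
    """Return {provider: key} for every provider whose key is set and non-empty."""
    env = os.environ if environ is None else environ
    return {
        provider: env[var]
        for provider, var in PROVIDER_ENV_VARS.items()
        if env.get(var)
    }


def missing_providers(environ=None) -> list:
    """Return the sorted names of providers with no key configured."""
    found = collect_api_keys(environ)
    return sorted(p for p in PROVIDER_ENV_VARS if p not in found)
```

Calling `missing_providers()` before starting an agent gives an early, readable error instead of a failed model call partway through a task.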

How secure are the sandboxes?

Each sandbox runs in its own Firecracker microVM with full isolation. No shared resources between sandboxes, and all code execution is contained within the secure environment.

How long can a sandbox run?

Sandboxes can run for up to 24 hours per session. For longer-running tasks, we recommend using our Background Agents feature.
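As a rough illustration of that limit, the sketch below decides whether a task with an estimated runtime fits in a single session or should be routed to a background agent. Only the 24-hour figure comes from the answer above. The function name `choose_runner`, the returned labels, and the 30-minute safety margin are assumptions made for the example.

```python
from datetime import timedelta

MAX_SESSION = timedelta(hours=24)            # session limit stated in the FAQ
DEFAULT_MARGIN = timedelta(minutes=30)       # assumed headroom, not a platform value


def choose_runner(estimated_runtime: timedelta,
                  safety_margin: timedelta = DEFAULT_MARGIN) -> str:
    """Return "sandbox" if the task fits in one session, else "background_agent"."""
    if estimated_runtime < timedelta(0):
        raise ValueError("estimated_runtime must not be negative")
    # Leave headroom so a slightly slow task is not cut off at the limit.
    if estimated_runtime + safety_margin <= MAX_SESSION:
        return "sandbox"
    return "background_agent"


print(choose_runner(timedelta(hours=2)))    # sandbox
print(choose_runner(timedelta(hours=30)))   # background_agent
```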

Can I run GUI applications?

Yes! Our Desktop Sandbox feature provides full virtual computer environments with GUI support for tasks requiring visual interaction.

What's the difference between development and production?

The sandbox environment is free for development and testing. Production deployment includes auto-scaling, dedicated resources, SLA guarantees, and enterprise support.